What happens when you combine an NVIDIA RTX 3060, an open-weight 14-billion-parameter LLM, and a global network of amateur radio operators? You get a surprisingly neat example of edge computing.

By day, I help enterprises scale complex architectures as a Red Hat OpenShift Technical Account Manager. But like many in our industry, my passion for problem-solving does not stop when I log off. A colleague recently suggested I stop keeping my side projects to myself and share what I build in my spare time. So, let me show you how I am using small, locally hosted AI to solve real-world problems right here in my amateur radio shack.

The Context: Open Source Digital Radio

Amateur radio is currently transitioning from analogue to digital. Much of the digital voice landscape has historically been dominated by locked-down proprietary codecs. FreeDV changes that: it is a suite of digital voice modes built entirely on open-source software. No secrets, no proprietary lock-in; just a community free to experiment.

The Problem: Finding the Signal in the Noise

When using FreeDV, operators rely on live data APIs to see who is transmitting. While standard tools are great, I wanted a way to instantly identify opportunities to communicate with rare, distant stations (known as ‘DX’ in the community) without manually parsing through an hour of rolling logs.

The Solution: FreeDV Reporter+

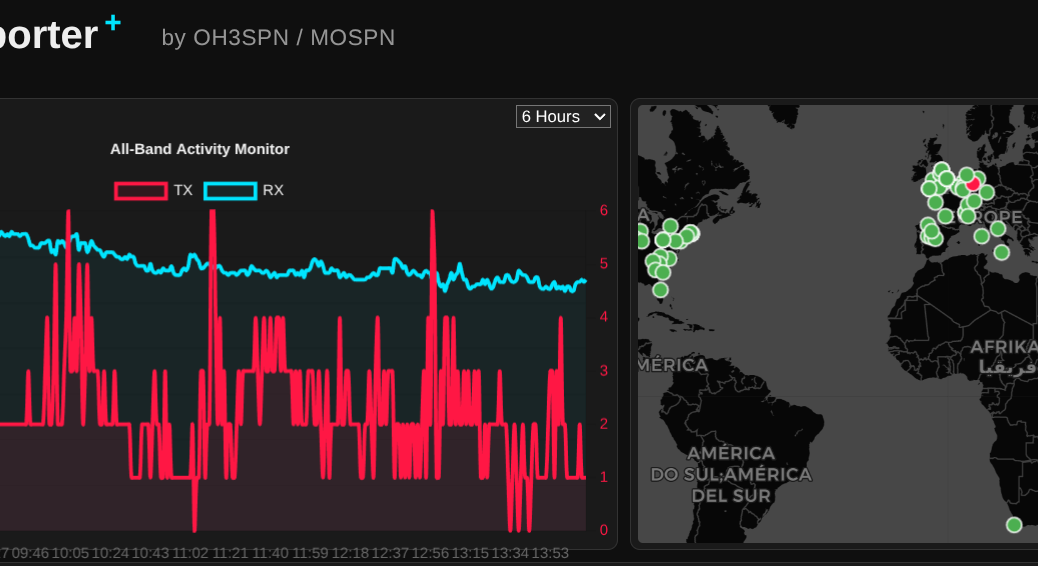

I built a free, web-based tool called FreeDV Reporter+. It connects to the FreeDV Socket.IO API to map live activity, but the real magic is the AI integration.

Every hour, the application analyses the live data stream, condenses the logs, and generates a human-readable summary of the best opportunities on the bands.
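To make the "condense the logs" step concrete, here is a minimal sketch of one way it could work. It is not the actual FreeDV Reporter+ code: the `Report` fields, the `condense` helper, and the sample callsigns are all hypothetical, chosen only to show how an hour of repeated reports can be collapsed into a few lines that fit comfortably in a small model's prompt.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    """One reception report. Field names are illustrative assumptions."""
    callsign: str    # transmitting station
    grid: str        # Maidenhead locator of the station
    freq_khz: float  # reported frequency
    snr_db: float    # signal-to-noise ratio of this report


def condense(reports, max_rows=20):
    """Collapse repeated reports into one line per (callsign, frequency).

    Keeps the best SNR seen for each pair and how often it was seen,
    then returns the strongest entries first.
    """
    best = {}
    counts = defaultdict(int)
    for r in reports:
        key = (r.callsign, r.freq_khz)
        counts[key] += 1
        if key not in best or r.snr_db > best[key].snr_db:
            best[key] = r

    rows = sorted(best.values(), key=lambda r: r.snr_db, reverse=True)
    return [
        f"{r.callsign} {r.grid} {r.freq_khz:.1f} kHz "
        f"best SNR {r.snr_db:+.0f} dB (seen {counts[(r.callsign, r.freq_khz)]}x)"
        for r in rows[:max_rows]
    ]


if __name__ == "__main__":
    # Placeholder data, not real activity.
    sample = [
        Report("N0CALL", "FN31", 14236.0, 3.0),
        Report("N0CALL", "FN31", 14236.0, 8.0),
        Report("M0XXX", "IO91", 7177.0, 5.0),
    ]
    for line in condense(sample):
        print(line)
```

Condensing before prompting keeps the input short, which matters on a 12 GB consumer GPU where context length competes with the model weights for memory.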

Under the Hood: Lean AI on Consumer Hardware

The application is a lightweight Python Flask app, containerised and deployed with Podman.

Instead of relying on expensive cloud APIs, the AI summarisation runs locally on a consumer-grade NVIDIA RTX 3060 GPU, using the qwen3-14b model via the Open WebUI API. This is a good example of how a relatively small (14B-parameter) model can provide real, tangible value by assessing live data right at the source.
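For readers who want to try something similar, the sketch below shows roughly what a summarisation call to a local Open WebUI instance might look like using only the Python standard library. It assumes Open WebUI's OpenAI-compatible `/api/chat/completions` endpoint with a bearer token. The base URL, API key, prompt wording, and helper names are placeholders of mine, and the model identifier depends on how the model is registered in your own instance.

```python
import json
import urllib.request

# Hypothetical prompt; the wording used by FreeDV Reporter+ is not published here.
SYSTEM_PROMPT = (
    "You are an assistant for amateur radio operators. "
    "Summarise the best DX opportunities in the activity log below."
)


def build_summary_request(log_lines,
                          base_url="http://localhost:3000",  # assumed local instance
                          api_key="YOUR_API_KEY",            # placeholder
                          model="qwen3-14b"):
    """Build (but do not send) a chat-completion request for the condensed log."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": "\n".join(log_lines)},
        ],
    }
    return urllib.request.Request(
        url=f"{base_url}/api/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def summarise(log_lines, **kwargs):
    """Send the request and return the model's reply text.

    Assumes an OpenAI-style response body with a `choices` list.
    """
    request = build_summary_request(log_lines, **kwargs)
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Separating request construction from the network call keeps the hourly job easy to test without a GPU, and makes it simple to point the same code at a different OpenAI-compatible server later.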

Scaling from the Shack to the Enterprise Edge

Processing data close to where it is generated and consumed is the core idea of edge computing. While my setup is a grassroots project, the architectural principles apply directly to modern enterprise challenges.

If an organisation wanted to scale an architecture like FreeDV Reporter+ across thousands of locations (perhaps telecom base stations, logistics networks or electrical substations), they would need to deploy and manage these AI applications consistently. This is exactly where Red Hat OpenShift comes in.

OpenShift extends Kubernetes to the edge, allowing teams to manage remote deployments with the same operational consistency as their core data centres. By utilising Red Hat OpenShift AI, teams can serve and monitor right-sized models securely at the edge, reducing latency and preserving bandwidth.

Crucially, OpenShift AI incorporates vLLM to manage the costs and performance of inferencing. vLLM is an open-source framework that drastically increases inference throughput and optimises memory usage. When deploying models to constrained edge environments where compute resources are strictly limited, this level of efficiency is absolutely vital to keeping operations lean and responsive.

Furthermore, with tools like RHEL AI and InstructLab, developers can easily fine-tune models for more specialist purposes. You get enterprise-grade accuracy for specific tasks without the massive compute costs associated with general-purpose behemoths.

The Takeaway

You do not need massive data centres to extract real value from AI. By strategically deploying small, fine-tuned LLMs at the edge, you can deliver real-time insights securely and efficiently. Whether you are hunting for rare radio signals across the globe or optimising a production line, the edge is where AI truly gets to work.

If you are a radio amateur, I would love for you to try out FreeDV Reporter+. And if you are interested in running robust AI workloads at the edge, let us connect and talk about OpenShift!